The community-vetted suite of student learning outcomes for discipline-specific and professional competencies can be used as the basis for geoscience program assessment.

Assessment of undergraduate geoscience curricula is the data-driven measurement of a program’s effectiveness in supporting student learning across a set of critical areas (Mogk, 2014). A learning assessment strategy is based on the desired student learning outcomes in individual courses and across a curriculum. The first step in developing an effective and tractable learning assessment protocol is identifying the key student learning outcomes. At the class level, instructors should have both course learning goals and related learning objectives for each class session, matched with appropriate assessments. At the curriculum level, faculty need to agree on the desired overall learning outcomes for students and develop appropriate assessments. If a program cannot meet some of its desired learning outcomes, it should provide students with co-curricular activities or with information on external resources that address those outcomes.

The geosciences, unlike engineering, which must follow the ABET accreditation criteria, do not have externally mandated priorities for the discipline. The consensus findings from the Summit efforts provide an externally vetted suite of student learning outcomes spanning discipline-specific concepts, professional skills, and competencies, which can serve as the foundation for assessment plans for undergraduate geoscience programs nationally.

Departments that use backward design or similar approaches to revise their curriculum will develop a matrix of student learning outcomes that provides a blueprint for assessment. Additionally, when colleagues are aware of prerequisite requirements, they can communicate with one another to help assess the effectiveness of individual courses. Beyond disciplinary, program, or department standards, student learning outcomes for any degree program need to align with institution-level learning outcomes, both to meet institutional needs and to improve the efficiency of data collection and analysis, which can become highly onerous if not approached strategically.
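As a minimal illustration of the outcome matrix described above, the Python sketch below inverts a course-to-outcome map to show which courses assess each program outcome and which outcomes no course covers (the gaps that, as noted earlier, a program might address through co-curricular activities or external resources). The course names, outcome labels, and the functions `courses_by_outcome` and `uncovered_outcomes` are hypothetical placeholders, not part of any published framework; a real matrix would likely also record the level at which each outcome is addressed.

```python
# A hypothetical curriculum map (course -> outcomes it assesses); a sketch only.
from typing import Dict, List, Set

# Hypothetical program-level student learning outcomes.
OUTCOMES: List[str] = [
    "field data collection",
    "quantitative reasoning",
    "written communication",
    "oral communication",
    "teamwork",
]

# Hypothetical courses and the outcomes each one explicitly assesses.
CURRICULUM_MAP: Dict[str, Set[str]] = {
    "GEOL 101 Physical Geology": {"written communication"},
    "GEOL 310 Structural Geology": {"field data collection", "quantitative reasoning"},
    "GEOL 495 Field Camp": {"field data collection", "teamwork", "written communication"},
}


def courses_by_outcome(
    curriculum: Dict[str, Set[str]], outcomes: List[str]
) -> Dict[str, List[str]]:
    """Invert the map: for each program outcome, list the courses that assess it."""
    coverage: Dict[str, List[str]] = {outcome: [] for outcome in outcomes}
    for course, assessed in curriculum.items():
        for outcome in assessed:
            # Outcomes not in the program list (e.g., typos) are ignored here.
            if outcome in coverage:
                coverage[outcome].append(course)
    return coverage


def uncovered_outcomes(coverage: Dict[str, List[str]]) -> List[str]:
    """Return outcomes that no course assesses, in program-list order."""
    return [outcome for outcome, courses in coverage.items() if not courses]


if __name__ == "__main__":
    coverage = courses_by_outcome(CURRICULUM_MAP, OUTCOMES)
    for outcome, courses in coverage.items():
        print(f"{outcome}: {', '.join(courses) or 'no course assesses this'}")
    print("Gaps:", uncovered_outcomes(coverage))
```

In this made-up example, `oral communication` is the only outcome that no course assesses, so it is the one flagged as a gap.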

A major challenge to any successful geoscience curricular assessment effort is finding reasonable means of measuring both disciplinary skills and non-disciplinary professional skills, such as teamwork, critical thinking, and written and oral communication. Several very useful rubrics have already been developed and are readily available for most of these competencies. For example, the Association of American Colleges and Universities (AACU) VALUE project1 has developed an extensive series of assessment rubrics for these kinds of competencies, covering inquiry, critical and creative thinking, oral and written communication, teamwork, problem solving, reading, quantitative and information literacy, integrative and applied learning, and lifelong learning. The National Association of Colleges and Employers (NACE) also provides useful student learning rubrics and career assessment resources. Additionally, faculty can assess aggregate student learning outcomes for an individual course using the Student Assessment of their Learning Gains (SALG) survey2, which is customizable and allows faculty to tailor questions to their courses. Its limitation, however, is that it relies on student self-reporting.

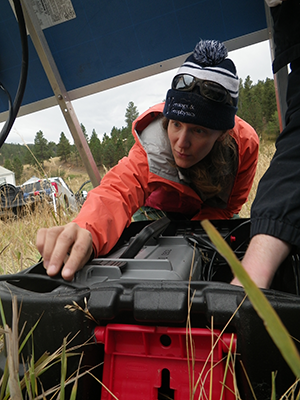

With reliable and usable rubrics in hand, the next challenge facing departments is identifying suitable student work products to review for such competencies. The common advice is to look at “capstone” curricular experiences to find assignments and student work products that reflect the full curricular experience. The traditional capstone experience in geology programs has long been the geology field camp, which requires students to apply the information, concepts, and skills learned in their classes to solve field-based geoscience problems (Box 6.1). Although field courses are a valuable assessment tool for many concepts, skills, and competencies, other capstone experiences may provide more suitable measures for many professional and computational/quantitative/data analysis skills. Departments need to identify the assignments and student experiences across their degree programs that, together, allow all the desired skills and competencies to be reviewed and analyzed. This review can be augmented by using student e-portfolios, as described below.

Box 6.1: Capstone Field Courses/Camps

What field experiences should aim to accomplish: field courses and camps draw on most of the skills students need, require them to integrate the concepts they have learned, and allow them to demonstrate their competencies.

Learning Objectives & Assessments for Field Experiences, from an NAGT workshop led by Kurtis Burmeister and Laura Rademacher3

- Design a field strategy to collect or select data to answer a geologic question
- Collect accurate and sufficient data on field relationships and record these using disciplinary conventions (field notes, map symbols, etc.)
- Synthesize geologic data and integrate them with core concepts and skills into a cohesive spatial and temporal scientific interpretation
- Interpret Earth systems and past/current/future processes using multiple lines of spatially distributed evidence
- Develop an argument that is consistent with available evidence and uncertainty
- Communicate clearly using written, verbal, and/or visual media (e.g., maps, cross-sections, reports) with discipline-specific terminology appropriate to your audience
- Work effectively, both independently and collaboratively (e.g., commitment, reliability, leadership, openness to advice, channels of communication, supportiveness, inclusiveness)
- Reflect on personal strengths and challenges (e.g., in study design, safety, time management, and independent and collaborative work)
- Demonstrate behaviors expected of professional geoscientists (e.g., time management, work preparation, collegiality, health and safety, ethics)

The Association of State Boards of Geology (ASBOG), which administers the Fundamentals of Geology examination as part of the professional licensure process for geologists in 34 states, supports the use of this examination as an exit assessment for graduating students. In Mississippi, all graduating seniors in geology programs take the exam, and the University of West Georgia also requires it (ASBOG, 2016). Recent state-level initiatives to establish “Geologist in Training” certifications based on passing the ASBOG Fundamentals examination provide new incentives for graduating seniors to take the exam. ASBOG also reports summary results to departments. As regional accreditors (led by the Southern Association of Colleges and Schools, SACS) begin to require “external measures” in proposed assessment plans, longstanding profession-oriented instruments such as the ASBOG Fundamentals exam begin to look more attractive. An obvious challenge with using the ASBOG exam is cost, since students or their department must cover the fee.

One consideration about the ASBOG Fundamentals exam is that it focuses on conceptual geoscience understanding rather than on mastery of important geoscience and other skills (such as mapping, field and laboratory data collection and interpretation, and data analytics). Also, the content coverage is comparatively traditional4: topics such as Earth system science and climate change are not directly addressed, and the exam emphasizes the more applied geosciences, such as hydrogeology and engineering geology. Beyond the ASBOG exam, other validated assessment instruments (i.e., test questions and student assignments that have been validated for assessment use) are available from the Geoscience Concept Inventory5 (Libarkin and Anderson, 2006). Alternatively, ConcepTests6 and Geoscience Literacy Exam questions7 offer assessments that can be used in introductory courses. Concept Sketches (Johnson and Reynolds, 2005) provide a means for quick, rubric-based assessment of visual conceptual content that is unique to the geosciences.

Longitudinal, post-graduation assessment measures can provide some of the best evidence of a degree program’s effectiveness. Tracking the professional progress of bachelor’s recipients through “check-in” surveys 1–5 years after graduation can provide useful perspectives on their academic preparation and on any unanticipated skill needs. The challenge in gathering such data is keeping track of graduates, which becomes harder the longer it has been since they completed their degrees. Successful university alumni and development offices have their own means and motivations for tracking recent graduates and may be able to assist, as can active alumni society chapters. For example, the University of South Florida Geology Alumni Society maintains a professional email network and adds all current students who participate in its outreach activities. Alternatively, professional social networking sites (LinkedIn, Facebook groups) offer a way to create and maintain contact with students both before and after graduation. Holding alumni receptions at major geoscience conferences is another way to keep in touch with graduates.

Another indirect but useful resource is engagement with employer partners, either as members of program advisory boards or through surveys and interviews. Employers who hire many of a program’s bachelor’s recipients see the level of preparation and the nature of the professional skills developed in the program; they can provide feedback on the program’s strengths, identify gaps, and note issues that graduates consistently have in areas such as communication, teamwork, and professional acumen. Access to this type of assessment data requires developing and nurturing relationships with local and regional employers, a task that is often a sidebar in the service and community engagement activities of geoscience departments. Similarly, faculty colleagues at graduate schools can provide insight into how well prepared a program’s graduates are for continued education.

E-portfolios as a Student Tool for Documenting and Self-assessing Development

Students can participate in self-assessment and document their development throughout their undergraduate program. They benefit from a solid understanding of their own progress, of the linkages between courses, and of the rationale for both in-course geoscience activities and required supporting courses. Students can ‘validate’ their understanding of concepts, skills, and competencies in tiers: exposure in class at the basic level, then application in a dedicated assignment, and finally independent application of the skill to a problem, which shows a fundamental level of mastery. This tiered approach is often used in corporate professional development programs. Documenting achievements is a core challenge for competency-based approaches. Courses, as a traditional unit, remain a relevant benchmark even from a competency-based perspective, especially if the intended learning outcomes are clearly identified so that students can recognize them, record them, and consider how they integrate into future learning experiences. Likewise, as co-curricular activities are integrated into the student experience, these non-traditional experiences also need to find their way into the student’s demonstrated record.

One solution for helping students demonstrate achievement across a spectrum of modes is an individual digital portfolio of work, transcripts, and achievements, usually referred to as an ePortfolio (e.g., Cribb, 2018). ePortfolios allow students to craft a professional identity in a digital format similar to a website or blog. Three general types of ePortfolios are widely recognized:

- A Working portfolio, or “holding tank,” in which students build the portfolio in iterative cycles of creating, reflecting, and revising (also called an integrated learning or developmental portfolio). Creating the portfolio is itself a learning experience for the student and may involve working with a mentor during its development.
- The Showcase or Display portfolio, which students open to others to demonstrate their achievements and evidence of mastery, either generally to potential employers and graduate schools or for specific projects.
- The Documentation or Directed portfolio, which is generally used for assessment and is more structured around program outcomes. This portfolio helps students map their work products, achievements, and other accomplishments to the program’s intended outcomes.

Departments and programs can use these portfolios collectively, across their students, as a means of assessment. An extensive literature on ePortfolios exists (e.g., Danielson and Abrutyn, 1997; Matthews-DeNatele, 2013; Barrett, 2010)8, and case studies demonstrate correlations between the use of ePortfolios and student engagement in learning, retention, and success, as well as a role for ePortfolios in catalyzing learning-centered institutional change (Eynon and Gambino, 2017, 2018).

Student ePortfolios can support evaluation of how well a geoscience program’s activities are meeting intended outcomes, especially in the complicated dance between the formal educational process controlled by the department and the needed co-curricular activities over which the department has less influence. Matthews-DeNatele (2013) described this situation well, characterizing ePortfolios as “living out the tension between data-driven strategies for verification and accountability, and personalized learning that is greater than the sum of its parts, and thus difficult to measure.”

The ePortfolio can become a permanent, dynamic record of the individual’s career, from formal education through a lifetime of work and learning. It records progress on the individual’s professional development plan and becomes a reference for preparing more traditional records of achievement, such as resumes and curricula vitae.

Recommendations:

- Understand and define the varied modes of success of your program: post-baccalaureate student outcomes, curricular outcomes, and individual course outcomes
- Have clear learning outcomes and matching assessments within individual courses
- Develop an effective and tractable learning assessment protocol for your program that uses key student learning outcomes and incorporates specific discipline, program/departmental, and university goals
- Use external assessment methods, such as AACU rubrics, ASBOG exams that reflect employer needs, ABET criteria as frameworks, and post-graduation longitudinal surveys, to understand skills gaps in the program
- Leverage modern student self-assessment tools, such as e-portfolios and the National Association of Colleges and Employers (NACE) student learning rubrics and career assessment resources, as an opportunity for students and the program to collaborate on aggregate outcomes